Wednesday, May 23, 2012

The Rise of the Connector - Part 2

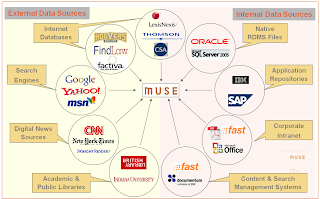

Connectors are the heart and soul of Federated Search (FS)

engines and with the rise in importance of FS in today’s fast paced, Big Data,

analyze everything world, they are crucial to smooth and efficient data

virtualization and flow. MuseGlobal

has been building Connectors, and the architecture to use them (the Muse/ICE

platform) and maintain and support them (the Muse Source Factory) for over 12

years. The people who design and build Connectors must be both computer savvy,

and also have a deep understanding of data and information and its myriad

formulations.

This second in the series of posts looks at the problems

arising as data is needed from outside the enterprise, and the complexities of

access and extraction that result. Not surprisingly, as a leading FS platform

Muse and its ecosystem are in the forefront of providing solutions to data

complexity problems in the modern world. (The first post considers the growing

importance of being able to access data from inside an organization.)

Part 2 A broader perspective

All of this speaks to the volume and velocity (of change)

of the data – two of the trio of defining “v”s of Big Data. The third v is

variety and this is now encompassing much more than the internal data silos of

the enterprise. Increasingly decisions need to take account of the outside

world: competitors, news media, commentators and analysts, customer feedback,

social postings and tweets.

Most of these sources are also fleeting. Customer records

will last for years, a tweet is gone in 9 days. Even product reviews are only

relevant until the next version of the product is released. And there are

a couple of additional hurdles to jump to get this valuable “perspective”

data.

This data lives outside the enterprise. Some other person

or organization has control of it. And that means the old ETL trick of grabbing

everything is likely to be severely frowned on – especially if it is tried

every night. Commercial considerations mean that, if this data is valuable to

you, then it is valuable to others, and the owners will not let you have it all

for free. This means the strategy of asking for exactly what is needed is the

way to go. It takes less time everywhere, will cost less in processing and

transmission, will cost less in data license fees, and will not alienate

valuable data sources. So “sipping gently” is the way to go.

Yes, in the paragraph above you saw “fees” mentioned.

Once the commercial details have been sorted out, there is still the tricky

technical matter of getting access through the paywall to the data you need,

and are entitled to. Some services will provide some of the data you want for

free, but most will require authenticated access even if there is no charge.

Those who are selling their data will certainly want to know that you are a

legitimate user, and be sure you are getting what you have paid for – and no

more.

For both of these considerations, Federated Search

engines, especially in their harvesting mode, allow all the “virtual data” to

become yours when you need it. Access control is one of the mainstays of the

better FS systems to ensure just this fair use of data. And gentle sipping for

just the required data is their whole purpose. Again a tool for the task

arises. MuseGlobal runs a Content Partner Program to ensure we deal fairly and

accurately with the data we retrieve from the thousands of sources we can

connect to, both technically and as a matter of respecting the contractual

relationship between the provider and consumer. We are the Switzerland of data

access – totally neutral and scrupulously fair, and secure.

Complexity everywhere

So now you are accessing internal and external data for

your BI reports. Unfortunately, while you might have a nice clean Master Data Managed

situation in your company, it is not the one the external data sources are

using (not unless you are Walmart or GM and can impose your will on your

suppliers, that is). And this means the analysis will be pretty bad unless you

can get internal product codes to match to popular names in posts and tweets.

There is a world of semantic hurt lurking here.

You need tools. Fortunately the Federated Search engine

you are now employing to gather your virtual data is able to help. Data

re-formatting, field level semantics, content level semantics, controlled

ontologies, normalized forms, content merging and de-merging, enumeration,

duplicate control, all these are tools within the FS system. They are powerful

tools and they are very precise, and they come with a health warning: “This

Connector is for use with this source only”.

Connectors are built, and maintained, very specifically

for a single Target. They know all about that target, from its communications

protocol to the abbreviations it uses in the data. Thus they produce the

deepest data extraction possible, and can deliver that data in a

consistent format suited to the Data Model and systems which are going to use

it. They are data transformers extraordinaire. This contrasts with crawlers at

the other end of the scale where the aim is to get a simple sufficiency of data

to handle keyword indexing.
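
As a rough illustration of what "built for a single Target" can mean, the sketch below shows two hypothetical Connectors. Each one understands the field names and abbreviations of exactly one source, and both emit the same common record. The class names, field names, rating scales, and abbreviation table are all assumptions made for this example; none of this is the Muse Connector API.

```python
from dataclasses import dataclass


@dataclass
class Record:
    """Common record shape that downstream systems expect (hypothetical)."""
    source: str
    title: str
    author: str
    rating: float  # normalized to a 0.0-1.0 scale


class ReviewSiteConnector:
    """Knows one hypothetical review site: ratings are stars out of 5."""
    name = "review-site"

    def to_record(self, raw: dict) -> Record:
        return Record(
            source=self.name,
            title=raw["headline"].strip(),
            author=raw["reviewer"],
            rating=raw["stars"] / 5.0,
        )


class ForumConnector:
    """Knows one hypothetical forum: scores are percentages, and the
    author field uses the site's own abbreviation for anonymous posts."""
    name = "forum"
    ABBREVIATIONS = {"anon.": "Anonymous"}

    def to_record(self, raw: dict) -> Record:
        author = self.ABBREVIATIONS.get(raw["by"], raw["by"])
        return Record(
            source=self.name,
            title=raw["subject"].strip(),
            author=author,
            rating=raw["score_pct"] / 100.0,
        )


review = ReviewSiteConnector().to_record(
    {"headline": " Great phone ", "reviewer": "J. Smith", "stars": 4}
)
post = ForumConnector().to_record(
    {"subject": "Battery issues", "by": "anon.", "score_pct": 40}
)
```

Both `review` and `post` end up in the same shape, which is the point: the source-specific knowledge stays inside the Connector, and the consumer sees one Data Model. It also shows why a layout change at the source breaks only the one Connector that depends on it.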

This precision means that they are in need of “tuning”

whenever their target changes in some way. Major changes like access protocols

are rare, but a website changing the layout of its reviews is common and

frequent. Complexity like this is handled by a “tools infrastructure” for the

FS engine whereby testing, modification, testing again, and deployment are

highly automated actions, reducing the human input to the problem solving, not

the rote.

And now another wrinkle: some of the data needed for the

analysis is not contained in the records you retrieve, and the only way to

determine this is to examine those records and then go and get it. As a simple

example think of a tweet which references a blog post. The tweet has the link,

but not the content of the post. For a meaningful analysis, you need that

original post. Fortunately the better FS systems have a feature called

enhancement which allows for just this possibility. It allows the system to

build completely virtual records from the content of others. Think more deeply

of a hospital patient record. This will have administrative details, but no

financial data, no medical history notes, no results of blood tests, no scans,

no operation reports, no list of past and current drugs. And even if you gather

all this, the list of drugs will not include their interactions, so there could

be more digging to do. A properly configured and authenticated FS system will

deliver this complete record.
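
The tweet example can be sketched in a few lines. This is only an illustration of the enhancement idea, under stated assumptions: the record layout, the `enhance` function, and the injected `fetch` callable are hypothetical, and the "web" is a small dictionary so the example runs offline. A real system would route the retrieval through an appropriate Connector and would need a more careful link extractor than this simple pattern.

```python
import re
from typing import Callable

# Simple pattern for illustration; it does not trim trailing punctuation.
URL_PATTERN = re.compile(r"https?://\S+")


def enhance(record: dict, fetch: Callable[[str], str]) -> dict:
    """Return a copy of the record with linked content attached.

    `fetch` is injected so that the retrieval step (HTTP, another
    Connector, a cache) stays outside this sketch.
    """
    links = URL_PATTERN.findall(record["text"])
    enhanced = dict(record)
    enhanced["linked_content"] = {url: fetch(url) for url in links}
    return enhanced


# Stand-in for a real retrieval step, so the example runs offline.
FAKE_WEB = {"http://example.com/post": "Full text of the blog post."}


def fake_fetch(url: str) -> str:
    return FAKE_WEB.get(url, "")


tweet = {"id": 1, "text": "Worth reading: http://example.com/post"}
virtual_record = enhance(tweet, fake_fetch)
```

The original `tweet` is left untouched; `virtual_record` is the "virtual" record that carries both the tweet and the post it points to.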

Analysis these days is more than just a list of what

people said about your product. It involves demographics and sentiment, and timeliness

and location. All these can come from a good analysis engine – if it has the

raw data to work from. Enhanced virtual records from a wide spectrum of sources

will give a lot, but making the connections may not be that simple. We

mentioned above “official” and popular product names and the need to reconcile

them. Think for a moment of drug names. Fortunately a good FS system can do a

lot of this thinking for you, and your analytics engine. Extraction of entities

by mining the unstructured text of reviews and posts and news articles and

scientific literature allows them to be tagged so that the analysis recognizes

the sameness of them. Good FS engines will allow this to a degree. Better ones

will also allow that a specialist text miner can be incorporated in the

workflow and give each record its special treatment – all invisibly to the BI

system asking for the data.
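
To make the "sameness" idea concrete, here is a deliberately simple sketch of tagging popular product names with an internal code. It assumes a hand-built alias table and plain word-boundary matching; the product names and codes are invented for the example, and a specialist text miner would do far more than this (misspellings, context, disambiguation).

```python
import re

# Hypothetical mapping from popular names to an internal product code.
ALIASES = {
    "acme phone": "PRD-001",
    "acmephone": "PRD-001",
    "acme tab": "PRD-002",
}


def tag_entities(text: str, aliases: dict[str, str]) -> set[str]:
    """Return the internal codes whose aliases appear in the text."""
    lowered = text.lower()
    found = set()
    for alias, code in aliases.items():
        # Word boundaries avoid matching inside longer words.
        if re.search(r"\b" + re.escape(alias) + r"\b", lowered):
            found.add(code)
    return found


codes = tag_entities(
    "Loving my new AcmePhone, way better than the Acme Tab!", ALIASES
)
```

Once every record carries the same internal codes, the analysis engine can group a tweet, a review, and a sales row together without knowing how each source spelled the product.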

Partnership at last

There is a lot of data out there, and a great deal of it

is probably very useful to you and your company. Using the correct analysis

engines and Federated Search “feeding” tools enables that data to be brought

together in a flexible, efficient, and accurate manner to give the information

needed for informed decisions.

Federated Search is still a very powerful and effective

way to search for humans, but it has grown up to be one of the most effective

tools for systems integration, the breaking down of corporate silos of data,

and the incorporation of data from the whole Internet into a unified, useable

data set to create real knowledge.

Muse is one of those tools which can supply the complete

range from end user fed search portals, to embedded data virtualization, and we

intend to keep up with the next turn of data events.

Monday, May 14, 2012

The Rise of the Connector - Part 1

Connectors are the heart and soul of Federated Search (FS)

engines and with the rise in importance of FS in today’s fast paced, Big Data,

analyze everything world, they are crucial to smooth and efficient data

virtualization and flow. MuseGlobal

has been building Connectors, and the architecture to use them (the Muse/ICE

platform) and maintain and support them (the Muse Source Factory) for over 12

years. The people who design and build Connectors must have rich technical

expertise, and also have a deep understanding of data and information and its

myriad formulations.

This series of posts will look at the problems arising as

data grew in volume, spread across systems, moved outside the enterprise, and

became all important for the business intelligence which informs current

corporate decisions. Not surprisingly, as a leading FS platform Muse and its

ecosystem are in the forefront of providing solutions to data problems in the

modern world.

This first post considers the growing importance of being

able to access data from inside an organization. (The second post looks at the

problems arising as data is needed from outside the enterprise, and the

complexities of access and extraction that result.)

Part 1 Wanted: data from over there, over here

As the world of Big Data grows daily and the

importance of unstructured data becomes more evident to information workers and

managers everywhere, methods of accessing that data become critical to success.

Typically in an enterprise the majority of its data is

held in relational DBMSs which are attached to the transaction systems that

generate and use it. These include HR, Bill of Materials, Asset Management

systems and the like. However, for

managers to make strategic decisions on even this data is difficult; they need

to see it all at once. The analysis managers need is performed by a Business

Intelligence (BI) system, and it works on data held in its own (OLAP) database,

which is specially structured to give quick answers to pre-formulated

questions.

And here is the first problem: transaction systems with

lots of data, and an analysis system with an empty database. The solution: set up and run a batch process

for each working database that takes a snapshot of its data and transforms and

loads it into the OLAP database. This is ETL (Extract, Transform, Load) and is

where most big company systems are at the moment. The transaction systems have

no method of exporting the data, and the analysis engine just works from what

it has. This three part solution works and it works well, but it has some

problems.
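
For readers who have not seen one, a batch ETL job can be sketched very compactly. The example below uses two in-memory SQLite databases as stand-ins for a transaction system and the OLAP store; the table names, columns, and the `run_nightly_etl` function are assumptions made for illustration, not a description of any particular product.

```python
import sqlite3

# Two in-memory databases stand in for a transaction system and the OLAP store.
orders_db = sqlite3.connect(":memory:")
olap_db = sqlite3.connect(":memory:")

orders_db.execute(
    "CREATE TABLE orders (customer TEXT, product TEXT, amount_cents INTEGER)"
)
orders_db.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [("acme", "widget", 1250), ("acme", "gadget", 800), ("zenith", "widget", 1250)],
)
olap_db.execute(
    "CREATE TABLE sales_by_customer (customer TEXT PRIMARY KEY, total REAL)"
)


def run_nightly_etl(source: sqlite3.Connection, target: sqlite3.Connection) -> None:
    # Extract: take a snapshot of everything in the transaction table.
    rows = source.execute("SELECT customer, amount_cents FROM orders").fetchall()

    # Transform: aggregate to the shape the pre-formulated questions need.
    totals: dict[str, float] = {}
    for customer, cents in rows:
        totals[customer] = totals.get(customer, 0.0) + cents / 100.0

    # Load: replace the previous snapshot in the analysis database.
    target.execute("DELETE FROM sales_by_customer")
    target.executemany(
        "INSERT INTO sales_by_customer VALUES (?, ?)", totals.items()
    )
    target.commit()


run_nightly_etl(orders_db, olap_db)
result = dict(olap_db.execute("SELECT customer, total FROM sales_by_customer"))
```

Note that the extract step reads every row, whoever the customer is. That is exactly the property that becomes a problem once freshness matters.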

Running a snapshot ETL on each working system at

“midnight” obviously takes time, and the data can be nearly a day old before the process

starts. This lack of “freshness” of the data didn’t matter too much 5 or even 2

years ago. It took so long to change systems as a result of the analysis that

data a day or so old was not on the critical path. But today’s systems can

adapt much more rapidly, and business decisions need to be based on hourly or

even by-the-minute data. (Of course, if you are in the stock and financial

markets then your timescale is down to micro-seconds, and you have specialist

systems tailored for that level of response.) So first we need to improve on our timing.

In order to do that we need to move from a just-in-case

operation to a just-in-time one. Rather than collect all the data once a day,

we need to be able to gather it exactly when we need it. Of course gathering it

overnight as historic data is still important and makes the whole process work

more smoothly and quickly as the just-in-time data is now only a few hours’

worth and so can be processed that much quicker to get it into the BI system.

Now we have a two-legged approach: batch bulk and focused immediate updates.

Sounds good, but the ETL software for the batch work will not handle the real

time nature of the j-i-t data requests.

For a start the ETL process grabs everything in the

transaction system database – all customers, all products, all markets. But a

manager is generally going to ask for a report on a specific customer or

product. It would be endlessly wasteful to grab all that “fresh” data for all

customers, when only data for one is needed. So the j-i-t process has to be

able to query the transaction system, rather than sweep up everything. It is

also almost certain that the required report will need data from more than one

transaction system, but probably not all of them. ETL is not set up to do this;

it needs a system capable of directing queries at designated systems and

transforming those results. And, finally, the extracted data may well need to

be in a different format. After all now we are loading the data directly into

the Business Intelligence analysis engine for this report (for speed), and not

importing it to the OLAP database. This

means that the structure and semantics are all different.

Increasingly the tools of choice for these j-i-t

operations are Federated Search (FS) systems such as MuseGlobal’s Muse platform.

They can search a designated set of sources (transaction systems), run a

specific query against them, and then re-format the results and send them

directly to the analysis engine. Initial examples of FS systems are user

driven, but for this data integration purpose, the more sophisticated FS

systems are able to accept command strings and messages in a wide variety of

protocols, formats and languages and act on them, thus allowing the FS system

to act as a middleman getting the data the BI engine needs exactly when it

needs it. Muse, for example, through its use of “Bridges” can accept command

inputs in over a dozen distinctly different protocols, and can query all the

major enterprise management suites in a native or standards-based protocol.
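
The just-in-time pattern described here, one focused query sent to a designated set of sources with the results reshaped for the caller, might look roughly like the sketch below. The two source functions are hypothetical stand-ins for Connectors to live transaction systems, and `federated_query` is an invented name; this is not how Muse or its Bridges are implemented.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Each "source" is a function that answers a query for one customer.
# In practice these would be Connectors talking to live systems.
Source = Callable[[str], list[dict]]


def crm_source(customer: str) -> list[dict]:
    data = {"acme": [{"contact": "J. Smith"}]}
    return data.get(customer, [])


def billing_source(customer: str) -> list[dict]:
    data = {"acme": [{"invoice": "INV-7", "open": True}]}
    return data.get(customer, [])


def federated_query(customer: str, sources: dict[str, Source]) -> list[dict]:
    """Send the same focused query to each designated source in parallel
    and tag every result with the source it came from.

    Assumes at least one source is supplied.
    """
    with ThreadPoolExecutor(max_workers=len(sources)) as pool:
        futures = {name: pool.submit(fn, customer) for name, fn in sources.items()}
        merged = []
        for name, future in futures.items():
            for row in future.result():
                merged.append({"source": name, **row})
    return merged


results = federated_query("acme", {"crm": crm_source, "billing": billing_source})
```

Only the sources named in the call are queried, and only for the one customer asked about, which is the contrast with the ETL sweep. Running the sources in parallel means the slowest source, rather than the sum of all of them, sets the response time.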

Should we move?

The need for speed of analysis and the volume of data

involved grows every day it seems. It takes time to extract all that data and to

build a big OLAP database just in case we want it. What’s more, building and changing the

structure to adapt to changing analysis needs takes time – a lot of it.

So modern BI systems have moved to holding their database

in memory, rather than on disk, just so everything is that much faster. Modern

analysis engines, many based on the Apache project’s Hadoop engine, can handle a lot of data in

a big computer, and do it rapidly. Both Oracle (Exalytics)

and SAP (Hana)

have introduced these combined in-memory database plus analytics engines, and

others are coming. (See here

for an InformationWeek take on the war of words surrounding them.) These

engines can be rapidly configured (often in real time, through a dashboard) to

give a new analysis report – as long as they have the data!

Moving all that data from the transaction system takes

time, so the current mode is to leave it there and rely on real-time

acquisition of what is needed. This is of course much less disruptive, fresher,

and much more focused on the analysis at hand. This is not to say that

historical data is not important; it is, and it is used by these engines, but

the emphasis is more and more on that last bar on the graph.

So, again we need a delivery engine to get our data for

us from all the corporate data silos, get it when it is needed, and then deliver

it to the maw of the BI analytics engine. Once again the systems integration,

dynamic configuration and deep extraction technologies of a Federated Search

engine come to the rescue. Muse supports the real time capabilities, parallel

processing architecture, session management, and protocol flexibility to

deliver large quantities of data when asked for, or on a continuing “feed”

basis.

Wednesday, May 2, 2012

Federated Search & Big Data gets bigger

The world of independent Federated Search is diminishing;

last week IBM announced that they will be acquiring Vivisimo.[1] There are a number of interesting aspects to

this, and the analysts have covered some of them [2],[3], but some particular quotes

from IBM itself and the analysts piqued my interest:

“The

combination of IBM's big data analytics capabilities with Vivisimo software

will further IBM's efforts to automate the flow of data into business analytics

applications …” [IBM]

“IBM also

intends to use Vivisimo's technology to help fuel the learning process for

their Watson

applications.” [IDC]

“Overall,

this is a very smart move for IBM, and it indicates that unstructured

information is going to play an increasingly

large role in the Big Data story…” [IDC]

All this shows the handling of structured and

unstructured information growing in importance.

What does IBM want Vivisimo for? It all seems to stem

from Big Data and the analytics that it can produce to enable better corporate

decisions. Of course, there’s also the

lovely teaser of a better performing Watson! Both Watson and Analytics massage

vast amounts of data and information to draw conclusions, assign values, and

create relationships. But, like all such endeavors, the quality of the result

depends critically on the quality of the incoming data. GIGO says it all!

Big Data analytics work very well with structured data,

where the “meaning” of each number or term is exactly known and can be

algorithmically combined with its peers, parents, siblings, and opposites to

give a visualization of the state of play at the moment or over time. Gathering

such data is a tedious process (hooray for computers!), but is not

intrinsically difficult. All that needs to happen is to set up a mapping from

each data Source to the master and let it run. The mappings are precise and the

process effective, but the volumes are vast and the time-to-repeat rather slow

for today’s fast paced world.

However, now add the fact that not everything you want to

know is held in those nice regular relational database tables, and the picture

looks far less rosy. Product reviews are unstructured, press releases are

vague, social comments are fleeting, and technical and legal documents tend to

be abstruse. But all these are vital if you want to make a really informed

decision. So bring in Federated Search to the rescue.

Federated Search is a real time activity. It is focused

on just what data or information is needed now. And it provides quality data.

It is directed to just those Sources needed for “this report”, and it analyzes

them in terms of known semantics so that the reviews, blogs, etc. mesh with the

numerical analytics, and then provide the essential “external view” of the situation.

And this is done right now, in real time. For the knowledge based systems (like

Watson) the FS Sources provide in-depth data pertinent to the current problem.

And if the Sources don’t have it, FS goes and finds it, thus allowing Watson (as an example) to add it to its knowledge base, and provide a

more informed opinion.

So that is why IBM is adding Federated Search to its

armory. What are the issues? In a word (or two): coverage and completeness.

All the Big Data systems use standardized access to the

massive databases of the corporation’s transaction and repository systems. Most

of these understand SQL or some other standard access language, and the

customization is a matter of reading a schema mapping table. That mapping table

is the same for every SharePoint or Exchange system (or similar), so once

created, it is easily deployed. These types of standardized accesses are often

referred to as “Indexing Connectors” because they extract enough data to enable

the content to be indexed and searched. (For more on this see a future post on

the deep differences between Connectors and Crawlers.)

Now, move to the world of web data and the complexity and

difficulty escalates enormously. The number

of formats and access methods multiplies almost to the point of one-to-one for

each Source. As an example look at the two press releases for this acquisition:

IBM’s is a press release, with an initial dateline, and no tags, Vivisimo’s [4]

is a blog post with tags and an author. The same Connector will not make sense

of both at the level of detail needed for a decision making analysis.

Add in the velocity of the data in the social media

(“velocity”, as you will recall, is one of the 3 “v”s that define Big Data –

Volume, Variety, Velocity) and the relatively slow aggregation times of

conventional databases become a problem. Timing is an issue because of volume,

but also because applications have to analyze input data from users and other

sources, store it in their transactional database, and then the ETL function

has to extract from that database and move the data to the analytics database

or storage area. These are two stages, both relatively slow, that must be

batched together.

So, when moving from structured data to unstructured data,

and from the sheltered waters of the corporation to the rough seas of the Web,

a very different set of techniques is needed. And that is where Federated

Search (FS) comes in. This is the truly

difficult part, and it’s where MuseGlobal shines. But first, some more information on what FS

is, and what it needs to do.

FS is immediate, which involves many synchronization and

“freshness” issues, but essentially solves the “velocity” problem by obtaining

data as it is needed. That is because FS is an “on demand” service. It is

brought into play just-in-time to get the data when needed, not in batch mode

to store it away just-in-case. Since it is used when needed it needs to be able

to target the Sources of interest right now. That means it is flexible and

dynamically configured, not painstakingly set up ahead of time and left alone.

Since it is a focused operation, targeting only the data

needed, it must be able to get the maximum out of each Source. This requires

two levels of complexity not common in other types of connectors or crawlers. First, these

Sources have specific protocols and search languages and often security

requirements. All these must be handled by the FS Connector so that the search

is faithfully translated to the language of the Source, and the results are

accurately retrieved. Second is getting the retrieved data into a useable form

(and format). This involves a “deep extract” involving record formats,

field/tag/schema semantics, content semantics, data normalization and

cleansing, reference to ontologies, field splitting, field combination, entity

extraction on rules and vocabularies, conversion to standard forms, enhancement

with data from third Sources, and other manipulations. None of this is

off-the-shelf processing where a single connector can be parameterized to work

with all Sources. So FS has started at the “single, deep” end of the spectrum

(crawlers are the epitome of the “broad, shallow” end) and builds Connectors to

the characteristics of each Source.

These Connectors bring focused, quality data, but they

come at a price. Vivisimo, MuseGlobal, and the other FS vendors build a very

special type of software – something that we know will eventually fail, when

the characteristics of the Source change. This needs a special dynamic

architecture to accommodate it. It needs very powerful ways to build Connectors

which can involve data analysts and programmers, as well as highly

sophisticated tools, such as the Muse Connector Builder. It needs a robust and

automated way to check for end-of-life situations, such as the Muse Source

Checker, and a highly automated build and deploy process – the Muse Source

Factory has been delivering automated software updates for 11 years now. Source

Connectors *will* stop working, and a big part of a viable FS ecosystem is

being able to get them back on line quickly and reliably. MuseGlobal has put together a data

virtualization platform with thousands of Connectors, because we know there’s a

one-on-one relationship with each data source if you want to connect to the

world out there. Figuring out the

unstructured data problem was one of our main goals at Muse from the very

beginning, some 11 years ago.

Of course, building Connectors in the first place is an equal

challenge, including the human element of dealing with a multitude of companies

publishing information and data. This is something all FS vendors have to

handle, and MuseGlobal chose to create a Content Partner Program about 10 years

ago where we talk regularly to hundreds of major Sources and content vendors.

Breadth of coverage of the Connector library is a major factor in “getting up

and running” time, and a major investment for the FS vendors. We believe that

Muse has one of the largest libraries with over 6,000 Source specific

Connectors, as well as all the standard API and protocol and search languages

ones for access where that is appropriate – but still with the “deep

extraction” which is the hallmark of Federated Search.

It is not an easy task to get right at a quality and

sustainable level, but a few vendors have produced the technology. MuseGlobal

is one – and Vivisimo is another.

IBM Analytics and Watson are set for a real quality

revolution!

Another analyst's comments can be found on the enterprise search blog at [6].

[5] http://vivisimo.com/technology/connectivity.html

[6] http://www.typepad.com/services/trackback/6a00d8341c84cf53ef016304c436dc970d

(*) You will need to be a subscriber to see the report

Wednesday, February 22, 2012

10 Trends – with Muse in the Middle

Today's eWeek online version, 2012-02-20, contains a story, Cloud Computing and Data Integration: 10 Trends to Watch, within its "Cloud" section seemingly written around the capabilities of Muse. Or, perhaps MuseGlobal has been developing capabilities within its flagship software platform which fit the "waves of the future" now starting to break on the shore of today's business needs.

The story is one of the familiar 10 slide shows in which they distill the wisdom of their in-house experts and those of external tech watchers – in this case some Gartner – and an interested developer, to gaze into a particular tech crystal ball. This one is focused on business needs and the cloud. In their own words:

Increasingly, large organizations are discovering and using enterprise information with the objective of growing or transforming their business as they seek more holistic approaches to their data integration and data management practices. This is all in an effort to address the challenges associated with the growing volume, variety, velocity and complexity of information. ... intensifying expectations for cloud data integration and data management as a part of a company's information infrastructure. ... to enable a more agile, quicker and more cost-effective response to business needs. ...eWEEK spoke to Robert Fox, of Liaison Technologies.

So what are the trends (details in the eWeek article)? And how does Muse fit in those trends?

EAI in the Cloud: Muse provides a cloud based service enabling standards based systems and those with proprietary messaging protocols to communicate with each other. This is a hub-and-spoke architecture, so once an application has its Connector written, it can communicate with ALL the other applications working with that Muse hub.

B2C Will Drive B2B Agility: Not so obvious here, but Muse has Connectors for access to the major, and quite a few minor, social platforms, so including their information and practices in the B2B world should be that much easier.

Data as a Service in the Cloud: Where service providers gather information and data from disparate sources, merge it, de-dupe it, cleanse it, and hand it on to the service user, Muse is an obvious platform with all of these capabilities baked in from the first batch. Increasing numbers of data providers and the rise of the data brokers mean Muse has a niche as the functional platform for these new providers.

Integration Platform as a Service: "Integration platform as a service (iPaaS) allows companies to create data transformation and translation in the cloud ..." I couldn't have put it closer to the core of what Muse does, if I had said it myself!

Master Data Management in the Cloud: Aggregation, de-duplication, transformation, normalization, conformance to standards (local and International), consistency, identification of differences, enrichment, delivery – this could again be a description of a Muse harvesting service. Right here when needed.

Data Governance in the Cloud: Not directly a Muse function, but its transaction and processing logs make provenance and quality of data easier to report on and find the areas of weakness.

Data Security in the Cloud: Secure communications, a sophisticated range of authentication options, encryption when needed, and NO intermediate storage of the data means that Muse as a transaction service is not the weak security link in the chain.

Business Process Modeling in the Cloud: Not a core strength of Muse – can't win them all. But complex data manipulation processes can be handled through scripting within Muse. Connection to and from external service platforms means that they can be allowed to control the modeling and allow Muse to deal with the data.

Business Activity Monitoring in the Cloud: Tie Muse's aggregation and data cleansing to a link with your favorite BI service and monitoring becomes rather easier. Because Muse links to systems, it will work with virtually any BI system and place the raw data and analyses wherever they are needed for review.

Cloud Services Brokerage: If this sounds like your business (or one you want to get into), then a look at the Muse platform could save a lot of time and effort to get a superior service up and running. As the technology behind a CSB it takes some beating!

So how did Muse do? Seven right on the money and three near misses seems like a pretty high score to us.

Friday, November 4, 2011

Will Hybrid Search get you better mileage?

The recent news of the acquisition of Endeca by Oracle has triggered a number of research notes by analysts. In particular Sue Feldman of IDC talked of the rise of a Hybrid Search architecture. What is it? Is it good for you? Should you have one? And where does MuseGlobal stand?

Sue defined Hybrid Search: “Search vendors perceived this logical progression in information access a number of years ago, and several were at the forefront of creating new, hybrid architectures to enable access to both structured and unstructured information from a single access point.”

She went on to point out that the new hybrid architecture was more comprehensive: “The new hybrid architectures incorporate the speed and immediacy of search with the analysis and reporting features of BI.” She also found a justification for it: “the enterprise of the future will be information centered, and will require an agile, adaptable infrastructure to monitor and mine information as it flows into the company.”

Nick Patience and Brenon Daly of 451 Research went on to define the hybrid architecture’s capabilities in a bit more detail for Endeca’s version: “Endeca’s underlying technology is called MDEX, which is a hybrid search and analytics database used for the exploration, search and analysis of data. The MDEX engine is designed to handle unstructured data – e.g., text, semi-structured content involving data sources that have metadata such as XML files, and structured data – in a database or application.”

These definitions acknowledge the growing importance of information from everywhere, in unstructured as well as structured form, and the need to be able to access and analyze it in the modern enterprise. Information can, and does, come from anywhere – internal CRM systems, company independent blogs and forums, totally differentiated social media such as blogs and tweets, competitor websites, news services, and even raw data repositories. And it comes in the form of database records, blogs, emails, tweets, images and more. In the modern enterprise the need is to be able to analyze and use all this information immediately and easily.

Mining information from these disparate sources is not something that business analysts or product managers should be spending their time on. They need a reliable supply of the information where the semantics can be trusted, the information is up-to-date, and where the analyses can be set up easily. This is where the “plumbing” comes in. Two stages are involved: gathering the information and analyzing the information. Only then can the user take action on the intelligence provided. Companies like MuseGlobal take care of the first stage, and repository and BI companies take care of the second.

Some companies, like Endeca, take care of both stages, but then you are locked into both products from a single vendor, and it is not usual that they are both “best of breed”. So MuseGlobal concentrates on what it does best – gathering, normalizing, mining and performing simple analytics on data – and seamlessly passes the information on to your choice of Data Warehouse, Repository, BI, analytics engine – whatever best suits the company’s needs.

What this means is that your organization sets up a Muse harvesting and/or Federated Search system once, pointing to the desired Sources of data, configures authentication where needed, and determines how the results are to be delivered to the analysis engine, specifying a choice of standards based or proprietary protocols and formats. Adding new Sources (or removing unwanted ones) is a point and click operation, and the Muse Automatic Source Update mechanism (and our programmers and analysts) ensures the connections remain working even when the sources change their characteristics – or even their address! About as close to “set and forget” as you can get in this changing world.

On schedule, or when requested by users, the data pours out of Muse in a consistent standardized format, with normalized semantics and even added enrichments and extracted “entities” or “facets” (Endeca’s terminology) and heads to the next stage of the information stack. This raw and analysed data input means the BI system (or whatever is in use) can now deliver more comprehensive analyses so staff can now concentrate on the information they have in front of them, not on seeking it in bits and pieces from all over the place.
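To make the "consistent standardized format, with normalized semantics" idea a little more concrete, here is a minimal Python sketch of the general technique. It is not Muse code: the source names, field mappings and the `Record` shape are all hypothetical, chosen only to show how records from differently structured sources can be mapped onto one schema and de-duplicated before being handed to the next stage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """Common schema handed to the downstream BI or analytics system (hypothetical)."""
    source: str
    title: str
    url: str
    published: str  # ISO 8601 date, YYYY-MM-DD


# Hypothetical per-source mappings: common field name -> field name used by that source.
FIELD_MAPS = {
    "crm": {"title": "subject", "url": "link", "published": "created_on"},
    "news": {"title": "headline", "url": "permalink", "published": "date"},
}


def normalize(source, raw):
    """Map one raw record from a named source onto the common schema."""
    mapping = FIELD_MAPS[source]
    return Record(
        source=source,
        title=raw[mapping["title"]].strip(),
        url=raw[mapping["url"]].strip(),
        published=raw[mapping["published"]][:10],  # keep only the date part
    )


def dedupe_key(record):
    """Crude identity for a record: its URL, ignoring case and a trailing slash."""
    return record.url.lower().rstrip("/")


def harvest(batches):
    """Normalize every raw record, then drop later duplicates of the same URL."""
    seen = set()
    unique = []
    for source, raws in batches.items():
        for raw in raws:
            record = normalize(source, raw)
            key = dedupe_key(record)
            if key not in seen:
                seen.add(key)
                unique.append(record)
    return unique
```

The consuming system only ever sees `Record` objects, so adding a source in this sketch means adding one entry to `FIELD_MAPS` rather than touching the analysis side. A real de-duplication step would need a more careful identity rule than a lowercased URL.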

And the information is not only from many sources, it is in varied formats. Forrester have just released a report which asks the questions “Have you noticed how search engine results pages are now filled with YouTube videos, images, and rich media links? Every day, the search experience is becoming more and more display-like, meaning marketers must align their search and display marketing strategies and tactics.” So the need to handle a complete range of media types and convoluted structures is becoming paramount or the received data will be just the small amount of text left over from the rich feast of the retrieved results. This is a topic for another blog, but suffice it to say that Muse can deliver the videos as well as the text.

Labels:

Analysis,

BI,

harvesting,

hybrid search,

unstructured data

Tuesday, August 23, 2011HP to acquire Autonomy

The news

Hewlett-Packard announced on August 18th an agreement to purchase Autonomy. Autonomy has moved beyond its original enterprise search capabilities by utilizing its IDOL (Integrated Data Operating Layer) as the forward looking platform to provide an information bus to integrate other activities. It now handles content management, analytics, and disparate connectors, as well as advanced searching, to provide users access to data gathered from multiple sources and fitting to their needs. It has also moved aggressively to the cloud and currently nearly 2/3 of its sales are for cloud services.

The impact on MuseGlobal

This is a major endorsement for MuseGlobal’s technology, with its functionality to break down the barriers between silos of information in the enterprise as well as elsewhere. In the words of the IDC analysts (ref below): “…to a new IT infrastructure that integrates both unstructured and structured information. These newer technologies enable enterprises to forage for relationships in information that exist in separate silos…” They call this integration a “tipping point” and see that it is a means for a new lease of life for HP in the data management and services area. Again according to IDC it provides: “A modular platform that can aggregate, normalize, index, search and query, analyze, visualize and deliver all types of information from legacy and current information sources will support a new kind of software application”

Although Autonomy will bring significant revenue and a large cloud footprint to HP, the major imagined benefit is seen in its ability to aggregate, normalize, analyze and distribute information across an enterprise.
This is an area where MuseGlobal’s Muse system with its ICE “bus” provides a very similar set of functionality with its Connectors (6,000+ and growing), Data Model and semantically aware record conversion, and entity extraction analysis – though not content management or enterprise search. Muse is also very strong in record enrichment so that virtual records can be provided both ad hoc and on a regular “harvested” basis to connected processing systems – such as content management or enterprise search.

Various commentators suggest that this move may “encourage” the other big players who HP competes against to have a look at acquisitions of their own. OpenText is the most noted possibility, though Endeca and Vivisimo get a mention. MuseGlobal is certainly in the same functional ballpark, providing functionality for enterprises, universities, libraries, public safety, and news media.

http://www.zdnet.com/blog/howlett/making-sense-of-hps-autonomy-acquisition/3345

HP to Acquire Autonomy: Bold Move Supports Leo Apotheker's Shift to Software

Labels:

Aggregation,

Analysis,

Autonomy,

Data Integration,

Endeca,

enterprise,

HP,

Information bus,

Silos,

Vivisimo

Wednesday, March 16, 2011Social is taking Search in a more Democratic direction...

Totally agree with this article published on Vator.tv.

We definitely saw this trend over the last few quarters as "social communities" emerged and relevant content started to show up in these communities without a lot of effort by an individual member. Our nRich product offers relevant content from trusted sources as well as its social rank. Gaming - like what JC Penney or Overstock tried - is not a big factor because other community members have already done the initial filtering. Check out the demo here.

Monday, March 14, 2011Why the Basis of the Universe Isn’t Matter or Energy—It’s Data

Enjoyed reading this interview in Wired magazine with noted science author James Gleick.

He quoted Claude Shannon's views on information: "A string of bits has a quantity, whether it represents something that’s true, something that’s utterly false, or something that’s just meaningless nonsense."

He also made a very succinct comment about how he (and perhaps more of us) should look at new technology: "When people say that the Internet is going to make us all geniuses, that was said about the telegraph. On the other hand, when they say the Internet is going to make us stupid, that also was said about the telegraph. I think we are always right to worry about damaging consequences of new technologies even as we are empowered by them. History suggests we should not panic nor be too sanguine about cool new gizmos. There’s a delicate balance."

Here's the link to the interview.

Monday, February 14, 2011NLP based Search in the middle of Man vs Machine battle

IBM's Watson takes on Jeopardy champions tonight.

Interesting article by Bruce Upbin. Here's a brief video to give you the background. Looking forward to it.

Tuesday, February 1, 2011IDC - Desperately Seeking Differentiation

I got a chance to attend an IDC event last week where they shared their predictions for the Software market and discussed how companies can establish differentiation in 2011 and beyond.

Key themes:

We'll share our perspectives in coming weeks and in the meantime would love to hear your predictions for 2011-2014.

Labels:

analytics,

cloud computing,

IDC,

mobile,

predictions,

social commerce

Saturday, January 29, 2011Gartner publishes "Top 10 Technology Trends in Information Infrastructure in 2011"

Key Trends that are more germane to areas where we can help our customers:

- Social Search
- Content analytics
- Content Integration

You can download the entire report here.

2010 was very interesting. Companies started to realize that the "Voice of the Customer" can be heard in forums not controlled by the company. Can't control the format, can't control the timing, can't control the veracity - but - cannot ignore the content. If you want a leading indicator of customer sentiments about your products, brand or people - keep tabs on the social media forums - blogs, facebook, twitter, etc.

The unstructured nature of this content has put a big question mark around the efficacy of existing IT strategy and investments in Master Data Management. These systems will need to evolve in 2011. As the content continues to grow exponentially, context-aware applications will become more useful for customers. In addition, content analytics will provide better guidelines for content producers, aggregators and consumers. Should make for an interesting 2011.

Tuesday, December 14, 2010Changing Times - Who's Reading, What and Where

Excellent chart published today by eMarketer that shows the different platforms preferred by different age groups to get news. IMO, the duo-platform mode (both traditional print and new digital platforms) is indicative of the transition phase that we are going through right now. In a year or two, more of us (even if we are not 21 years old) will drop the habit of "traditional" platforms. I am trying to kick the habit of reading a newspaper in the morning and simply catching up on the news on my laptop, iPhone or my wife's iPad. (iPad is by far the most convenient platform - especially before the morning coffee kicks in).

Take the Wikileaks story. It's almost a waste of time to keep abreast of this interesting story in a newspaper. The videos, documentaries, documents themselves - compel you to follow the story from your nearest browser. Our increasing tendency to get and share news with our trusted friends will only drive us further away from "static" traditional platforms like newspapers and magazines. What do you think - are you ready to "kick" the habit? I am trying hard.

Thursday, December 9, 2010Google Ready to Move Microsoft Exchange Data to the Cloud

Here you go - More enterprise content being moved into the cloud - this is Google luring Exchange data. Service isn't free - $25 per user per year and $13 per user for existing Postini customers.

Database.com - Makes Sense

Kudos to Marc Benioff @ Salesforce.com on launching another cloud product - Database.com (read the WSJ article here). Great job of packaging existing capabilities into a broader offering.

It's simple and it makes sense. Considering everything else that is being offered in the cloud, it is surprising that a fundamental building block in an IT stack - a database - didn't get there first. The traditional companies probably didn't want to ruin their existing license and support revenues by pushing a cloud offering. The newbies probably didn't have the capital or market nous to create a buzz. Marc has both and right now he is running laps around the competition. See the intro video here.

The other reason I like the announcement - we get a chance to help our customers move their content into database.com, or out of it, or reference it without using any of it. The federated content model is here to stay and database.com will simply help customers store their content in logical repositories - across different public and private clouds.

It will be interesting to dig further into the capabilities and limitations of database.com. The data model under Salesforce.com has been evolving fairly rapidly from supporting simple SFA applications to supporting fairly complex enterprise processes and content structures. However, the primary challenge for enterprise today is dealing with the unstructured data they own and all the relevant content that is generated outside their control and outside their systems. It doesn't matter whether database.com is the answer to all of this today or ever. Salesforce is facilitating the massive migration of cloud based applications - and that is good enough. As we move forward, accessing and virtually aggregating these content sources - without physically moving the content - will become the norm.

Would love to understand what Google is thinking about all this. They have taken a very clever route to capturing enterprise content with Google Docs and Google Apps - all searchable and all seemingly liberated from legacy "database" norms. Looking forward to 2011 and more content in the cloud...

Monday, November 15, 2010WTD about TMI?

TMI - Too Much Information, Too Much Content, Too Much Data - are all here to stay with us. There will be more of everything that we will pull and more of everything that will be pushed to us.

On my BART ride last week (yes - very green of me), I read an interesting article (http://bit.ly/cHG21g) in Bloomberg Businessweek magazine on RIM CEOs failing to communicate their vision/strategy to the market. 99.9% of all the CEOs in the world will come out 2nd best when compared with Steve Jobs, but there was a particular comment made by Jim Balsillie (RIM Co-CEO) that made me realize that RIM's market share and mind share in smartphones could erode faster than Motorola's or Nokia's: Balsillie thinks the world is wrong about apps. Many are just…

Apple has 250,000 apps because most of them are serving a critical need of presenting content in a form that can be consumed very easily - especially on a mobile device. Yes - you can browse the web and search for whatever you need - but that does not help the TMI syndrome. If anything it exacerbates it. The apps are making it easy for iPhone, iPad or Android users to filter and consume the content available all over the Web. Even Google has embraced the app paradigm - even though "googling" for content is good for Google's ad revenues.

Gus Hunt, CTO for CIA, who is building a "peta" scale infrastructure to handle the data and computing requirements, said at Cloud Expo last week that he wants any data to be used by any application. He does not want to invest in building applications that are dependent on a particular data set or a particular data set being created for a specific application. The days of consolidating all the data into a single repository are long gone and never coming back.

Question is What To Do about TMI?

First - Leverage technology that can reference data from any source in any format. It isn't feasible to try and standardize the legacy content repositories. Data will stay federated, so your content integration strategy should account for it as well. Speed and agility in accessing new content sources is a competitive advantage for today's businesses.

Second - Ensure that data cleansing can be automated.
This will allow you to work with incomplete or partial data - especially when working with a large number of data sources.

Third - Focus on normalizing the aggregated data, so that the consuming applications can work independent of data sources. This will allow you to serve content to a variety of consuming applications and end devices - especially the constrained mobile devices that need additional filtering or formatting. This will also allow the content creators to focus on creating content and not to worry about the device/platform battles that are being waged on the other side of the value chain.

More to come on the innovation in these areas.....

Tuesday, June 16, 2009Building Success in a Flat Economy: Beating the Vendor Renegotiation Game

It's certainly no news these days that the economy could be a lot better, but a recent survey from Gartner points to both the scale of the cutbacks affecting enterprise technology providers and how they are being hit by those cutbacks. According to Gartner 42 percent of the surveyed CIOs had cut back their budgets in Q109, and 90 percent of CIOs had made budget changes opting for cutbacks. While the average overall decline was about 4.2 percent among the surveyed CIOs, those cutting back were averaging about 7.2 percent.

Of more concern to information services providers, though, is how these cutbacks are impacting the vendors servicing these CIOs and their organizations.
The two most popular methods for dealing with budget cutbacks this year mentioned in the Gartner survey have been to reduce headcount and to renegotiate contracts with vendors. So although information services providers may not be getting the axe, they're certainly getting that pared-down feeling in many instances. Of course, their clients still expect them to deliver outstanding service for those lower prices. Goodbye margins, hello aspirin. It's going to be a bumpy stretch. There's no magic wand that can help an information services company avoid these cutbacks, but there are strategies that you can deploy which just might help to make the difference between pain and gain during challenging times. One key strategy is to use cutbacks as an opportunity to open a dialogue with your customers about aggregating information services. With different departments and work roles using different information services, oftentimes with overlapping functions and content sources, helping your customers to reduce the number of interfaces into those services can wind up being a cost-saving move for them that may create new revenue opportunities for you. In other words, instead of customers being forced to choose between one information service over another, help them to deliver the information from as many of them as possible under one more easily supported service. Now, wouldn't it be nice if that aggregator who helped your client to solve their budget problems while improving information access was your company? Well, it certainly can be you - especially if you're a MuseGlobal OEM partner. MuseGlobal's MuseConnect content integration services enable any information technology and services provider to provide well-integrated access to any number of searchable information sources rapidly and reliably. 
Instead of looking at all of the other platforms in use at your clients as potential competitors for budget, they can become the sources of content that can be fed into an integrated solution that your own platform champions. You can use MuseConnect to bring content from other platforms into your own platform using our exclusive Smart Connector technologies or create a custom interface that combines information from both your platforms and others exactly the way that your clients want to see it. And with MuseConnect's built-in management of network security, user administration and content source updating the complexities of bringing multiple platforms under one access point will turn out not to be so complex at all. Client support costs go down, their productivity goes up. So when push comes to shove on which platforms will get the lion's share of whatever budget is left, you can put yourself at the head of the line for getting a fat cut of that budget. Our MuseConnect technologies are a key enabler for such dramatic turns because of their ability to provide reliable content integration at the drop of a hat. With more than 6,000 pre-built and easily configured Smart Connectors at your disposal, MuseConnect makes it easy to move rapidly from "We can help you" discussions to "We're ready to show you" discussions that can help to turn around budget discussions from a paring down to a win-win save - or more. And as always, since our Smart Connectors are maintained around the clock as a part of your MuseConnect service, your support costs for these victories are factored in easily to your bottom line. So as you're wrestling with clients who are trying to eke their way through tougher times, remember that there can be great opportunities in these times to turn the tables on your competitors and to be the first to step forward as the aggregation solution that helped to save your clients money and to wind up improving information access all at the same time. 
Hopefully that will be enough to get your clients through the next year or so - and to put you in the driver's seat for when times get better.

Labels:

CIO,

content connectors,

content integration,

economy,

gartner,

museglobal,

survey

Monday, June 1, 2009Partnering with the Real-Time Web, 140 Characters (or more) At a Time

One of the great challenges in confronting the value of the open Web is that many of the most popular and timely sources of information are not being produced by traditional publishers. Who would have thought, for example, that a simple text messaging service like Twitter would explode into a major conduit for alerting people to breaking news and insights produced by millions of people? Yes, there's the "I just put peanut butter on my sandwich" kind of "breaking news" in that mix, but then, if you make peanut butter, perhaps that's news if you're trying to understand the quickly shifting world of consumer habits. Of course there's also a healthy mix of other sources in the stream of Twitter messages, including breaking headlines and comments from major news organizations, politicians, celebrities and just about anyone else who can pump out information 140 characters at a time on a moment's notice on their PCs and mobile devices. If Twitter represents the cutting edge of "real-time" information on the Web, then it's an edge with a powerful force behind it.

Yet for all of the recent excitement about Twitter, it's hardly the only source of important real-time information from online sources. The truth of the matter is that any information source can be a real-time source of information - if it's information that's important to you as soon as it's updated and you can access it in time for that information to be immediately relevant. It's important to factor in access to sources like Twitter into your strategy for real-time information awareness, but these other sources of information - what you might call "the dark real-time Web" - can provide you with insights and advantages that others will be missing in their search for real-time relevance. If it's important to your decision-making process and it's out there, you need it now.

This concept of engineering real-time relevance is nothing new to MuseGlobal, of course. Our MuseConnect technologies have been used for harvesting on-demand information from our thousands of Smart Connectors for more than a decade. MuseConnect is particularly well suited for extracting real-time relevance from any number of content sources because it's been designed from the outset to pass through updates and alerts from the freshest and most relevant information it can find from any content source.
Instead of building a massive database of potentially out-of-date information from many sources, our Smart Connectors can go out and get fresh information from each and every source that matters to you and deliver it to you on any information platform where it's needed in whatever normalized data formats suit your operations. In other words, it's important to have software that monitors Twitter if you want to be aware of real-time opinions, news and events, but why stop there? Other social media services, videos, GPS-enabled applications, corporate Web sites, subscription databases, government and public databases, catalogs and, most importantly, your clients' own internal databases - all of these are potential sources of real-time relevance for the services that publishers and technology companies provide to clients. If you wait for a search engine crawl to find the nuggets of value in those sources that you need, they could be hours, days or more out of date, and that's if they're even crawled, of course. If you rely only on data harvesting tools, you could find yourself waiting for those tools to be repaired when a source's data formats change and break down your ability to provide real-time relevance, while missing out on thousands of sources that are beyond the reach of typical harvesters. So getting the most valuable information in real-time is not just as simple as parking a Twitter feed into a piece of software. It's about getting every bit of the freshest and best-organized information that you can use in the right format at the right time on the right platforms, day in and day out - and being able to return the favor of providing fresh updates to the sources that supply your own platforms. That's a much, much bigger picture for real-time than many publishers and technology companies may have put their arms around, but it's the picture that MuseGlobal's OEM partners have been embracing for years. 
Because we sell our MuseConnect technologies as a service, it's a picture that keeps on getting refreshed. We keep our partner's content connectors up-to-date every day, so that their thousands of installations around the world can have real-time information all the time. The buzz on "real-time" is bound to build as more and more applications are built to take advantage of emerging fast-updating sources like Twitter, which means that you have to be ready to focus on the final products of your real-time automated editorial efforts as much as possible - instead of the infrastructure that brings that content from any number of sources of real-time information updates to your platform. And that's where MuseConnect and its Smart Connector technology comes in. We connect to the freshest sources of information and make sure that keeping connectivity to real-time information is the least of your concerns.
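For readers who want a feel for the mechanics, here is a minimal Python sketch of the general idea of querying live sources on demand rather than relying on a stale crawl. It is not MuseConnect code: the `federated_search` function and the connector callables are hypothetical stand-ins. The sketch assumes each connector is simply a function that takes a query string and returns a list of results, and it shows one connector failing (for example, because a source changed its format) without taking the others down.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def federated_search(connectors, query):
    """Query every connector in parallel and collect results per source.

    `connectors` maps a source name to a callable taking the query string.
    Returns (results, failures): results per working source, and an error
    message per source whose connector raised an exception.
    """
    results = {}
    failures = {}
    with ThreadPoolExecutor(max_workers=max(1, len(connectors))) as pool:
        # Submit one task per source and remember which future belongs to which source.
        futures = {pool.submit(fn, query): name for name, fn in connectors.items()}
        for future in as_completed(futures):
            name = futures[future]
            try:
                results[name] = future.result()
            except Exception as exc:  # a broken source must not sink the whole query
                failures[name] = str(exc)
    return results, failures
```

The `failures` map is the interesting part: it is the list of connectors that need attention, which is the maintenance burden described above. A production version would also need per-source timeouts and authentication, which this sketch leaves out.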

Tuesday, May 19, 2009Getting Ready for the Bounce: How Do You Turn Opportunities for Innovative Services Into Revenues?

The author Mark Twain once scoffed at reporters who had been writing stories about his having passed away by saying at a public appearance, "The rumors of my death have been greatly exaggerated." Well, perhaps the buzz that the global economy is dying is a bit premature as well. While things are still pretty tough out there, we're picking up a lot of signs recently that companies are starting to bounce back and seize great opportunities for information products and services that beckon around the world.

The key to many of these opportunities, though, is the time and the cost of execution. With lean product development staffs and leaner client budgets, getting a client to "yes" means being able to turn ideas into actions as quickly and as cost-effectively as possible. In the information industry, this often means that you have to be able to demonstrate a new concept for a product or service as rapidly as possible. The good news for many information technology and services companies is that today's software development environments enable companies to cobble together some great new application demos very quickly. A common sticking point, though, is that the content that would make those demo apps look best is not quite what clients were looking for - or just not available.
Canned data and pages may get you past a few quick moments with a client, but when you want them to pull the string on the revenue-generating phase of a client relationship it generally takes more than that to build a high level of confidence. This is where MuseGlobal has really helped our clients to shine in recent years, and even more in today's tough economy. Our MuseConnect content integration technologies offer information services developers truly amazing turnaround times in assembling content from any number of internal and external information sources. Having developed more than 6,000 Smart Connectors for MuseConnect through the years means that your ideas for assembling as much valuable content as possible in a new or enhanced information product or service can appear more rapidly than you may imagine. Since MuseGlobal maintains its own development staging services for our clients, we have the ability to start streaming updates from content sources to your development environment whenever you're ready to receive them. Sometimes within just a few hours of having spoken about an idea your development staff can be working with live data from the key information sources that they need to accelerate their efforts. This can make an enormous difference in jump-starting innovation and product development from many perspectives. Instead of waiting weeks or months to get content from databases, subscription services, file services and search engines flowing into prototype applications, you can eliminate content connectivity and availability as a bottleneck for developing and testing new software and services. Besides shortening the product development cycle in general, it also means that you'll spend more time looking at "real-world" information flowing through your new applications that will put it through its paces. The result will be more reliable and useful applications that encounter fewer surprises once they hit your clients' production environments. 
Time-to-market issues get solved more rapidly and efficiently, which means that more revenues come more quickly. In an economy in which anything that can help shorten a sales cycle is critical to meeting sales goals, you might say that MuseGlobal's Smart Connectors are like found money.

Once you do sell and install your new applications, the even better news is that MuseGlobal Smart Connectors will continue to be reliable for you and your clients all on their own. That's because MuseGlobal delivers its Smart Connectors as a service to you through MuseConnect's unique content integration architecture. Whether it's pre-existing content source connectors or connectors tailored to your specific needs, MuseGlobal will be testing your content connectors constantly - and ensuring that they keep on working for your clients. Instead of having to focus on maintenance issues that annoy your key accounts, client teams can focus on the next great opportunities for your products and services to solve valuable problems.

There are no perfect answers to bouncing back from the effects of a tough economy, of course, but we've discovered in these times that our MuseConnect OEM content integration solutions can help more answers to be tried, tested and delivered to more of our clients' customers - an advantage that can turn a tough sales environment into one in which you can have the edge through rapid innovation.

I hope that we can help you and your teams to see how MuseGlobal technologies and services can put your own organization on the path to innovative information products and services soon - and to keep them running smoothly and cost-effectively for years to come.

Labels: content, content connectors, content integration, information, museglobal, sales, technology

Monday, April 27, 2009

Oracle Gets Some Sun: Why Platforms Need More than Technology

There was a time when Sun Microsystems was a rising, shining force in Silicon Valley, parlaying its variation of the Unix operating system running on its proprietary hardware into quite a tidy empire of servers, workstations and software services for many major enterprises and Web publishers. Topping off Sun's holdings was the promising Java platform-independent applications environment, which enabled more than a decade's worth of software on many platforms beyond Sun's own. And recently Sun's acquisition of MySQL gave them a key component in Web-oriented database development. Great stuff, but not great enough to drive Sun into a more competitive position against giants such as Microsoft and IBM.

It makes perfect sense, then, for Oracle to have picked up Sun to gain more leverage against those same giants. With cloud computing and other forces commoditizing network server hardware, the pressure is on for most technology companies to add more value further up the technology "stack" as cost-effectively as possible.
Be it MySQL, Oracle's own database offerings or a cross-platform programming environment such as Java, it takes a very full kit of technology offerings these days to be able to devise solutions that command premium investments from most enterprises. Sun's traditional hardware and systems assets may be of some use to Oracle to protect its databases running on those platforms, but the far greater value of the acquisition lies in components that will enable Oracle to attach its databases and value-add services to the applications development environments that allow for rapid, cost-effective services development.

In other words, having excellent software is a good thing, but these days it's more important to help your clients to have excellent solutions. Increasingly this means ensuring that you have every resource at your disposal that could contribute to those solutions. That includes, of course, access to all of the content sources that they need to deliver the right information at the right time in the right applications on the right platforms. It's simply no longer possible to capture all of those content sources in a single database or Web server. There are too many legacy platforms and databases and too many new and rapidly changing technologies collecting new sources of content to risk any technology company's future on a handful of content assets.

Oracle's purchase of Sun Microsystems is a key acknowledgment that the future of computing technology makes effective content integration an absolute necessity. No wonder, then, that MuseGlobal finds itself a certified Oracle partner. Our MuseConnect for Oracle Secure Enterprise Search uses our world-leading OEM content integration technologies to enable content from key databases, subscription information services and search engines to integrate with all of the sources already accessible via Oracle's own Secure Enterprise Search software.
The MuseGlobal technologies behind MuseConnect for Oracle Secure Enterprise Search have enabled hundreds of technology companies and publishers to connect their technology platforms to the content sources that they need at thousands of installations around the world. With over 6,000 Smart Connectors to a very wide variety of content types and sources, MuseGlobal enables any content-delivering platform to push its way up the technology "stack" to higher levels of value rapidly and very cost-effectively.

Certainly Oracle's acquisition of Sun bodes well for a marketplace that needs more powerful and cost-effective publishing solutions that can be delivered on a wider array of enterprise, home and mobile platforms than ever before. It's nice to know, though, that MuseGlobal's content connector solutions are a key component in just about every imaginable major information publishing platform available today. I expect that we'll be seeing a lot more healthy competition amongst the technology giants with this key move by Oracle. In the meantime, we're glad here at MuseGlobal that our rapidly deployed and highly cost-effective Smart Connector content solutions help them all to compete more effectively.