Connectors are the heart and soul of Federated Search (FS)

engines and with the rise in importance of FS in today’s fast paced, Big Data,

analyze everything world, they are crucial to smooth and efficient data

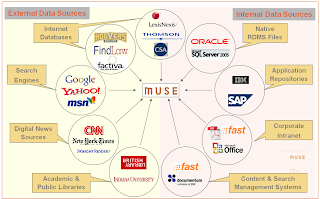

virtualization and flow. MuseGlobal

has been building Connectors, and the architecture to use them (the Muse/ICE

platform) and maintain and support them (the Muse Source Factory) for over 12

years. The people who design and build Connectors must be both computer savvy,

and also have a deep understanding of data and information and its myriad

formulations.

This second post in the series looks at the problems that arise when data is needed from outside the enterprise, and the complexities of access and extraction that result. Not surprisingly, as a leading FS platform, Muse and its ecosystem are at the forefront of providing solutions to data complexity problems in the modern world. (The first post considers the growing importance of being able to access data from inside an organization.)

Part 2: A broader perspective

All of this speaks to the volume and velocity (of change) of the data, two of the trio of defining “v”s of Big Data. The third v is variety, and this now encompasses much more than the internal data silos of the enterprise. Increasingly, decisions need to take account of the outside world: competitors, news media, commentators and analysts, customer feedback, social postings and tweets.

Most of these sources are also fleeting. Customer records will last for years; a tweet is gone in 9 days. Even product reviews are only relevant until the next version of the product is released. And there are a couple of additional hurdles to jump to get this valuable “perspective” data.

This data lives outside the enterprise. Some other person or organization has control of it. And that means the old ETL trick of grabbing everything is likely to be severely frowned on, especially if it is tried every night. Commercial considerations mean that, if this data is valuable to you, then it is valuable to others, and the owners will not let you have it all for free. The best strategy is therefore to ask for exactly what is needed. It takes less time everywhere, will cost less in processing and transmission, will cost less in data license fees, and will not alienate valuable data sources. So “sipping gently” is the way to go.
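
As a rough illustration of “sipping gently”, here is a minimal Python sketch of a request that asks a source only for matching records, only for the fields the analysis uses, and only for items newer than the last visit. The endpoint and the parameter names (`q`, `fields`, `since`, `limit`) are hypothetical; every real source has its own query syntax, which is exactly what a Connector has to know.

```python
from urllib.parse import urlencode


def build_query(base_url, terms, fields, since, limit=50):
    """Build a narrow request URL instead of asking for 'everything'.

    All parameter names here are assumptions made for illustration;
    they do not describe any particular source's API.
    """
    params = {
        "q": " ".join(terms),        # only records matching these terms
        "fields": ",".join(fields),  # only the fields we will actually use
        "since": since,              # only records newer than the last run
        "limit": limit,              # cap the size of each response
    }
    return base_url + "?" + urlencode(params)


url = build_query(
    "https://api.example.com/reviews",  # placeholder endpoint
    terms=["acme", "widget"],
    fields=["id", "text"],
    since="2024-01-01",
    limit=10,
)
print(url)
```

The point of the sketch is the shape of the request, not the syntax: a small, targeted query repeated when needed, rather than a nightly bulk copy.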

Yes, in the paragraph above you saw “fees” mentioned. Once the commercial details have been sorted out, there is still the tricky technical matter of getting access through the paywall to the data you need and are entitled to. Some services will provide some of the data you want for free, but most will require authenticated access even if there is no charge. Those who are selling their data will certainly want to know that you are a legitimate user, and to be sure you are getting what you have paid for, and no more.

For both of these considerations, Federated Search engines, especially in their harvesting mode, allow all the “virtual data” to become yours when you need it. Access control is one of the mainstays of the better FS systems, ensuring just this fair use of data. And gentle sipping for just the required data is their whole purpose. Again, a tool for the task arises. MuseGlobal runs a Content Partner Program to ensure we deal fairly and accurately with the data we retrieve from the thousands of sources we can connect to, both technically and as a matter of respecting the contractual relationship between the provider and consumer. We are the Switzerland of data access: totally neutral, scrupulously fair, and secure.

Complexity everywhere

So now you are accessing internal and external data for your BI reports. Unfortunately, while you might have a nice clean Master Data Managed situation in your company, it is not the one the external data sources are using (unless you are Walmart or GM and can impose your will on your suppliers, that is). This means the analysis will be pretty bad unless you can match internal product codes to the popular names used in posts and tweets. There is a world of semantic hurt lurking here.

You need tools. Fortunately, the Federated Search engine you are now employing to gather your virtual data is able to help. Data re-formatting, field-level semantics, content-level semantics, controlled ontologies, normalized forms, content merging and de-merging, enumeration, duplicate control: all these are tools within the FS system. They are powerful tools and they are very precise, and they come with a health warning: “This Connector is for use with this source only”.

Connectors are built, and maintained, very specifically for a single Target. They know all about that target, from its communications protocol to the abbreviations it uses in the data. Thus they produce the deepest possible data extraction, and can deliver that data in a consistent format suited to the Data Model and systems which are going to use it. They are data transformers extraordinaire. This contrasts with crawlers at the other end of the scale, where the aim is to get a simple sufficiency of data to handle keyword indexing.
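
To make the “one Connector, one Target” idea concrete, here is a minimal Python sketch. It is not how Muse Connectors are written; the two sources, their field names, and the `Review` data model are all invented for illustration. Each connector knows the quirks of exactly one source and delivers records in one common shape.

```python
from dataclasses import dataclass


@dataclass
class Review:
    """Common record shape (a hypothetical data model)."""
    product: str
    rating: float  # normalized to the range 0.0 to 1.0
    text: str
    source: str


def site_a_connector(raw: dict) -> Review:
    # Hypothetical Site A: rating is 'stars' out of 5, product is under 'item'.
    return Review(
        product=raw["item"].strip(),
        rating=raw["stars"] / 5,
        text=raw["body"].strip(),
        source="site_a",
    )


def site_b_connector(raw: dict) -> Review:
    # Hypothetical Site B: rating is a percentage string such as "80%",
    # product is under 'prod_name'.
    return Review(
        product=raw["prod_name"].strip(),
        rating=int(raw["score"].rstrip("%")) / 100,
        text=raw["comment"].strip(),
        source="site_b",
    )


a = site_a_connector({"item": " Widget ", "stars": 4, "body": "Good value. "})
b = site_b_connector({"prod_name": "Widget", "score": "80%", "comment": "Good value."})
print(a.rating == b.rating)  # both ratings are now on the same scale
```

Neither function would work against the other source, which is the health warning in practice: the precision that makes the extraction deep also ties the connector to its target.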

This precision means that they are in need of “tuning” whenever their target changes in some way. Major changes, such as a new access protocol, are rare, but a website changing the layout of its reviews is common and frequent. Complexity like this is handled by a “tools infrastructure” for the FS engine whereby testing, modification, testing again, and deployment are highly automated actions, reducing the human input to the problem solving rather than the rote work.

And now another wrinkle: some of the data needed for the analysis is not contained in the records you retrieve, and the only way to determine this is to examine those records and then go and get it. As a simple example, think of a tweet which references a blog post. The tweet has the link, but not the content of the post. For a meaningful analysis, you need that original post. Fortunately, the better FS systems have a feature called enhancement which allows for just this possibility. It allows the system to build completely virtual records from the content of others. For a deeper example, think of a hospital patient record. This will have administrative details, but no financial data, no medical history notes, no results of blood tests, no scans, no operation reports, no list of past and current drugs. And even if you gather all this, the list of drugs will not include their interactions, so there could be more digging to do. A properly configured and authenticated FS system will deliver this complete record.
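
The tweet example can be sketched in a few lines of Python. This is only an illustration of the enhancement idea under stated assumptions: the record layout, the `enhance` function, and the `linked_content` field are hypothetical, and the fetcher is passed in as a parameter so that a real, authenticated retrieval step could be substituted for the stand-in used here.

```python
import re
from typing import Callable, Dict, List

URL_RE = re.compile(r"https?://\S+")


def enhance(record: Dict[str, str], fetch: Callable[[str], str]) -> Dict[str, object]:
    """Return a copy of the record with the content of each linked page attached."""
    # Find the links in the record text, trimming trailing punctuation.
    urls: List[str] = [u.rstrip(".,;)") for u in URL_RE.findall(record["text"])]
    enhanced: Dict[str, object] = dict(record)  # leave the original untouched
    enhanced["linked_content"] = [fetch(u) for u in urls]
    return enhanced


# Stand-in for a real fetcher: a dictionary playing the part of the web.
FAKE_WEB = {"https://blog.example.com/post-1": "Full text of the blog post."}

tweet = {"id": "t1", "text": "Great read: https://blog.example.com/post-1."}
virtual = enhance(tweet, lambda url: FAKE_WEB.get(url, ""))
print(virtual["linked_content"])
```

The same pattern extends to the patient record: each follow-up lookup (tests, scans, drug interactions) is another fetch driven by what the first record contains.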

Analysis these days is more than just a list of what people said about your product. It involves demographics and sentiment, and timeliness and location. All these can come from a good analysis engine, if it has the raw data to work from. Enhanced virtual records from a wide spectrum of sources will give a lot, but making the connections may not be that simple. We mentioned above “official” and popular product names and the need to reconcile them. Think for a moment of drug names. Fortunately, a good FS system can do a lot of this thinking for you and your analytics engine. Extraction of entities by mining the unstructured text of reviews, posts, news articles and scientific literature allows them to be tagged so that the analysis recognizes the sameness of them. Good FS engines will allow this to a degree. Better ones will also allow a specialist text miner to be incorporated in the workflow to give each record its special treatment, all invisibly to the BI system asking for the data.
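
A very small Python sketch shows what “recognizing the sameness” means for drug names. It assumes a hand-built alias table that maps popular and brand names to an internal code; the codes `DRUG-001` and `DRUG-002` are invented, and a real text miner would do far more than match keywords.

```python
import re
from typing import List

# Hypothetical alias table: popular or brand name -> internal product code.
ALIASES = {
    "acetaminophen": "DRUG-001",
    "paracetamol": "DRUG-001",
    "tylenol": "DRUG-001",
    "ibuprofen": "DRUG-002",
    "advil": "DRUG-002",
}

# One pattern that matches any known alias as a whole word, ignoring case.
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, ALIASES)) + r")\b", re.IGNORECASE
)


def tag_entities(text: str) -> List[str]:
    """Return the sorted internal codes of every product mentioned in the text."""
    codes = {ALIASES[m.group(1).lower()] for m in _PATTERN.finditer(text)}
    return sorted(codes)


print(tag_entities("Took Tylenol, then paracetamol again"))
print(tag_entities("Advil beats tylenol for me"))
```

Once each record carries these tags, the analytics engine can count a tweet about “Tylenol” and a journal article about “paracetamol” as mentions of the same thing.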

Partnership at last

There is a lot of data out there, and a great deal of it is probably very useful to you and your company. Using the correct analysis engines and Federated Search “feeding” tools enables that data to be brought together in a flexible, efficient, and accurate manner to give the information needed for informed decisions.

Federated Search is still a very powerful and effective way for humans to search, but it has grown up to be one of the most effective tools for systems integration, the breaking down of corporate silos of data, and the incorporation of data from the whole Internet into a unified, usable data set to create real knowledge.

Muse is one of those tools which can supply the complete range, from end-user federated search portals to embedded data virtualization, and we intend to keep up with the next turn of data events.

222 comments:

1 – 200 of 222 Newer› Newest»Nice! And I would also like to draw your attention to data room due diligence and to cloud technology in general.

After reading your post I thought you would also enjoy reading this article.

Really mean a lot. Thanks for sharing your valuable post. I like the way of the elaboration of your topic so please keep informative posting. I am waiting for your next post, so please give us a link to your next post here and visit HP Printer Support for HP help. You may also get help for the printer through the HP Printer Offline Fix !! HP Printer Offline Windows 10

This is a great article. It gave me a lot of useful information. thank you very much. We are also a service provider that deal in the assignment writing help sector. So, the students struggling to write their college assignments can opt for our online dissertation formatting service and can get a quality assignment written from us.

big data is the field that treats ways to analyze, systematically to provide exactly the same information. as the name shows the big data in which the data sets are too large or complex to be dealt with. if we talk about the current usage the team big data tends to refer to the use of predictive analytics, user behavior analytics.

i have received an order to do my homework for me cheap that's a reason i have a knowledge about this topic.

There are many companies in the market which is manufacturing printers but HP is one of the best of them. Millions of people around the world using HP printer to complete their printing task. You should contact HP Printer Tech Support Phone Number to overcome printer issues like paper jam, printer offline, print head and other issues.

When you try to start up HP laptop, you fail to start. It shows that your device has some kinds of technical errors. To repair your HP laptop technically, you need to follow some technical steps suggested by technical experts. We are an independent third party tech support service provider, providing unlimited technical support or help for HP laptop users in very nominal charges. Our technical support professionals are highly trained and experienced to resolve startups issue fully from the origin. Our HP laptop support team is available 24/7 to help you for any type of technical issues.

There is no doubt that AOL Desktop Gold is world-wide popular software that is designed to lessen the workload of employees. But, due to some technical glitches, AOL Gold may start to behave in an improper way. It may show sudden freezing, start-up issues, unexpected crashing and many more issues which you haven’t even heard of in your entire life. However, you can easily eliminate these nasty errors by taking aid from highly experienced experts. In order to take their guidance, you need to put a call at AOL Desktop Gold support number which is accessible throughout the day and night.

Just dial 1-877-916-7666, the tech support number of Assistance for All. We are the most popular tech support agency operating around the country.

We help to find solutions for HP Printer and PCs errors, Contact HP Customer Service and get expert guidance to download and install HP drivers, manuals and troubleshooting steps.

Refer the Articles -

HP Support Assistant | HP Printer Assistant | HP Wireless Assistant | HP Printer Support | HP Laptop Support | HP Printer Tech Support Phone Number | HP Printer Offline | HP Print and Scan Doctor

The article has actually peaks my interest. I am going to bokmarks your web site and maintain checking for brand new information.

Dell Customer Service

Reinstall Kasperksy Total Security :

Reinstall Kasperksy Total Security helps you to reinstall & safety for kaspersky anti virus . It safeguard against unsafe internet connectivity or external devices like powerful engine,better usability,esily accessible on list of devices, and greater protection against online dangers.

Happy to be a part of this blog post.Hope every readers will enjoyed this post.Good Job.Keep going on. Expect more from here again. Essay Writing Services

Does your Epson printer have malfunction? In this situation, you can go to approach for Epson support to look out the expected change in it. Definitely, you have approach on the right cover page if certain technical difficulties arise in it. Any user is not compelled to accept its technical hindrance. In urgent situation, you can browse our web portal. You can easily by pass this message by following the below mentioned steps or dialing Epson error code 0xf1. Here, you will get the suitable guidance from highly educated experts to resolve this error, when you use an Epson wireless printer setup and several errors pop-up. These errors are mainly crops up due to improper installation or software issues.

QuickBooks Error 6105 is one such error. In this article, we have given you the tips to resolve this error, but prior to this, you need to know about this error and learn about the roots of this issue.

error like 80004005 or 80004003

We are independent HP Customer Service Support team in USA. Call HP Printer Assistant Number if your facing any problem in HP Devices.

Thumbs up for the great information you share on this post.

QuickBooks Error Code 80070057

How to Fix QuickBooks Error Code 15270

Most of the software does not work correctly due to the incorrect setup and installation. Sometimes user also face error with Norton antivirus due to incorrect setup. If you don’t know how to do Norton antivirus setup the go to norton.com/setup to get setup guide to secure your device from threats.

You created a contagious site and your article also well i always wait for your blog because I like to get information from your blog, java is a tough language to learn, so we provide help to make assignment of programming language so visit our website to get help.. Programming assignment Help

Earlier, Windows 7 was the most popular choice for laptops and desktops amongst the users globally. If you want to reinstall windows 7 on your system, you need perform some reinstalling steps to make the PC run smoothly and hassle-free with the latest updates. You can reinstall Windows 7 from a recovery image provided by your computer manufacturer.

It has been great to read this article it was quiet to be nice you every detail about the topic Thanks for sharing this article with us.

Restore Computer

If you want to install or reinstall windows 10 | on your PC you need to have a Windows 10 USB Flash Drive or DVD. In case, you have none of them, you cannot install or reinstall Windows 10 on your PC. Initially, you need to boot into the operating system, if you fail to boot, you might not be able to reinstall Windows 10 without compromising your system files.

Nice post!! this is very infromative ,Thanks for share it with us Mobile app development companies in india

Thanks for sharing such an informative post.

If you are willing to learn how to install, update, and set up QuickBooks Database Administrator, you can read on this blog post. We have created this to assist you in finding out what you require to successfully use the QuickBooks Database Server Manager.

For more queries, just give us one perfect call to our experts at QuickBooks Pro Support Number +1-800-969-7370. We assist our clients in their troubled-time and get abrupt assistance avails for 24/7.

Impressive Post!! this is very informative post!!How is second trimester abortion performed

Amazing post !! this is very informative post kids care pediatrics Clifton

Thank you for sharing this! Does your Quicken software are not working as they should be and also throwing some out of the blue errors? If yes, then you need to take Quicken Customer Service and take proper aid from me. I am easily reachable throughout the day and night to conquer customer queries. So, whenever you want to terminate your entire problems allied to your Quicken software as a consistent Quicken tech expert I am always ready to deliver my best. I am always eager to help all deprived one, so that they can use their Quicken software to the fullest visit quicken.com/support.

As slot games have become more and more popular the themes and reel configurations have changed to ensure they stay interesting and entertaining.

scr888

Broadly speaking the more symbols that you spin that match on a payline the more chance you have winning big.

login scr888

In this text, we will review some of the key concepts that you should keep track of while you are playing.

slot scr888

Molitics is India's first Sociopolitical platform pledging the latest and unbiased political information. We deal with political news and videos of all the states of the country. We give you the chance to know your leaders by providing their information and political backgrounds. We also offer a unique platform known as "Raise Your Voice" where common man get chance to raise the issues they are facing.

http://blog.dyscalculia.org/2017/10/british-military-service-and-dyscalculia.html?showComment=1571995147680#c6278581477963632407

Having an Issue regarding your HP Printer? Just contact us through HP Printer Support Phone Number or you can directly visit our website at hp-printer-supports.com and any of your issues are handled as soon as possible.

Here's an easy, step-by-step guide on how to update a Garmin GPS-

Step 1: Connect your device to your computer. Before beginning the update, you'll to connect your Garmin GPS device to a computer. ...

Step 2: Install Garmin Express. ...

Step 3: Access or purchase updates. ...

Step 4: Disconnect your device.

After using all steps, you are unable to fix your issues regarding Garmin GPS Updates, just make a call at our toll-free number +1-844-776-4699 or visit our website gps-map-updates.com

https://gps-map-updates.com/

Nice blog!Thanks for sharing.Keep it up.hd video editing software

very nice!!! This is really good blog information thanks for sharing.

National Scholarship Portal

Thanks for sharing this awesome blog. Keep it up! I really share this with my friends on Nursing Assignment Help.

Here you can find the trusted Top Web Designing Companies in Bangalore. So, that its easy to you find the best web designers from our online directory.

Are you looking for the best dissertation editing service in UK? We are best editing service provider company in UK since 2013.

There are many concepts of democracy. Moreover, different grounds are considered for classifying democratic concepts. It should be noted that the real concepts of democracy are very complex and multifaceted. Therefore, any theoretical construction is largely arbitrary and mainly reflects only the most characteristic features of the proposed democratic model. One of the criteria on the basis of which the concepts of democracy are classified is that which has priority in exercising power: an individual, a social group, or a people as a whole community. You can read the essay on this topic on the website EssayMania

This is very informative post !! thanks for share it with us .we provides best f&b uniform Singapore

Thanks for posting such an interesting post. I truly like it so much.

QuickBooks Error Code Status 3170

QuickBooks File Doctor: Repair Damaged Company File or Network

How to Access the Accounting tools in QuickBooks Desktop Enterprise

Get Instant QuickBooks Support – Call +1(800)880-6389

Get the best Academic Assignment Help by eminent Writers of New Zealand. Hire our professionals to complete your assignment with 100% accuracy and Pocket-friendly price. Boost your grades with New Zealand assignment help. Our Writers are highly professional and they are giving quick and reliable services for all college and university students in New Zealand. Our writers are giving many of the assignment help services like business communication assignment help, MBA Assignment Help, Marketing assignment help, homework help, entrepreneurship assignment help, entrepreneurship homework help, and many more.

Completing an Assignment is very easy when you have proper content and proper guidelines. UAE Assignment help is providing the best assignment help in UAE. UAE Assignment Help have several years of experience in the field of assignment help and we have more than 20000+ happy customers. Our Assignment Helper UAE delivers your content with 100% accuracy. Get a fast response by 24x7 Active customer support. We are providing many of the assignment help services like Accounting Assignment Help, Economics Assignment Help, Finance Assignment Help, HR Assignment Help, Leadership assignment help, Marketing Assignment Help, Instant assignment help MIS Help, Marketing Assignment Help, MBA assignment Help and many more.

Rachat de crédit France.The ideal solution to rebalance your budget. You have taken out too many loans and you are having trouble ...

When you attempt to introduce and setup Norton antivirus program on your PC. That is the reason we givewww.norton.com/setup for those defenseless clients who can't setup Norton. By tapping on this connection and following all the on-screen provoked guidelines as needs be in a correct manner, at that point inside a restricted time of interim you can get this application without hardly lifting a finger. They will proffer you the world-class cure with 100% fulfillment.

norton activation key

Norton Setup Key

norton Antivirus key

norton product key

norton download

norton setup

norton.com/setup

HP printer is very much connected with Windows and Mac Operating framework. The fitting driver establishment on Windows and Mac Operating framework assumes a dominating job for immaculate printing of records and photographs from 123.hp.com/setup 6978 printer. Attempt to introduce the most recent driver programming for continuous printer administration.

123.hp.com/setup

hp setup

123.hp.com/setup 4520

123.hp.com/setup 7640

123.hp.com/setup 5530

123.hp.com/setup 5055

123.hp.com/setup 5660

This is a smart blog. I suggest it. You have a lot of understanding of approximately this issue, and so much passion. You additionally know how to make people rally behind it, glaringly from the responses.

Top SEO Companies in India

Best SEO Companies in India

Top SEO Company in India

Create Your Yahoo Account By Dialing Our Yahoo Phone Number

If you are unable to create your yahoo account then we would recommend you to make a call on our Yahoo Phone Number. They will help you with the proper account creation and handling your mails and other stuff in the yahoo web service. Our Yahoo customer service provider is very hardworking and they are capable enough in completing the task within a dedicated time frame. Our service provider are available all round the clock.

Facing Problem While Accessing Emails Dial Yahoo Phone Number Now

Sometimes email exchanging might be a little irritated. So dial Yahoo Phone Number and get your email query resolved immediately. They will help you in resolving your query within a couple of minutes. You can rely on their services and can even call on odd hours without any hesitation as they are available all round the clock. Every issue related to yahoo’s account can be solved by yahoo customer care service representative.

Having read this I thought it was really informative. I appreciate you finding the time and effort to put this informative article together. I once again find myself personally spending a significant amount of time both reading and posting comments. But so what, it was still worthwhile!

onsite mobile repair bangalore

Good day! I could have sworn I’ve visited this site before but after looking at a few of the posts I realized it’s new to me. Regardless, I’m definitely delighted I stumbled upon it and I’ll be book-marking it and checking back often!

asus display replacement

I needed to thank you for this great read!! I absolutely loved every little bit of it. I have got you bookmarked to look at new things you post…

huawei display replacement

bookmarked!!, I like your website!

vivo display replacement

Very good post. I'm facing some of these issues as well..

lg battery replacement

Way cool! Some very valid points! I appreciate you writing this article and the rest of the website is extremely good.

motorola display replacement

Take Cash App Support To Resolve Login Hurdles From The Root

Are you facing several sorts of problems such as login issues, and many more? For the purpose getting the right aid to get the whole host of problems sorted out in a couple of seconds, you should quickly opt for the Cash App Support service for the correct guidance.

https://www.contact-mail-support.com/phone-number/square-cash

Create A Cash Pin For Your Account Using Cash App Customer Service

Are you one of those cash app users who want to set up cash pin? Do you want to make use of it for the safe transactions? In such a case, you should quickly opt for the Cash App Customer Service and share the problems directly with the professionals regarding the problems you are facing with.

https://yahoo-contact.net/cash-app-customer-service-number/

Amazon Change Password-Get quick and instant help

While using a seller or buyer account, if you find somewhere stuck then a better suggestion is to go for online help. In case you lost of amazon password, then the most common thing you can do is search forAmazon Change Password in google. If searching there you can’t find a relevant answer, then you can connect with our online customer service platform. Our team has in-depth knowledge and extensive experience will guide you with the effective steps. https://attcustomerservicephonenumber.com/blog/how-to-change-amazon-password/

Webstod is a Leading Digital Marketing Company in Faridabad which is helping your brand to cut through the competition with its best SEO Company in Delhi.

As a prominent and provider of best content writing services, we excel at delivering content adhering to global standards.

We are one of the top content writing company which provides world-class and unique content across India. Contact Richa Content Writer for more information.

In the Problem that you are as yet confronting a few issues in your sbcglobal email settings, at that point you can contact our client service they will assist you with getting free of your concern

sbcglobal.net email settings

Get Your Yahoo Main in Outlook | Yahoo Phone Number

Don’t know how to get your Yahoo Mail in Outlook 2010? Well it is pretty easy to do once you contact the knowledgeable team of Yahoo at Yahoo Phone Number. They know the process and instruct you over the phone to perform the process carefully. Also, they help in sorting out some common problems that come in the middle of way.

Detailed Instructions At Yahoo Phone Number to Secure Your Account

Make your Yahoo mail Account hack-proof just by following some simple procedures. Without the help of experts use of Yahoo Account can be tiresome, therefore interact with professionals through Yahoo Phone Number. On the bright side, they already know the ropes and technical know-how to eliminate issues. Get to follow their quality instructions sitting back at your home.

Increase Sales For Your Product| live Chat For Facebook Marketplace

Facebook Marketplace is an effective tool in promoting your business but only when it is used in a right way. Communicating with experts for Facebook is considered as the best option. The professional team will guide you through all the effective strategies, making an attractive page so that every person land on your Facebook page and make regular visits.

Have you got locked out of your Mac, It's easy to panic if you are facing the situation but you can reset it and get back in to your mac account. you have to follow some steps and you will be able to reset mac computer. and you can also contact mac

AOL modern emails related services provider company that provides emails services with full safety and security of your data, you can download AOL desktop gold from the web and can enjoy the services offered by aol gold.

Yahoo Help For password Issues, Hacked Account and Others

Sometimes Yahoo users come across problems while accessing their yahoo mail Account. It can be some delicate problems such as password hacking or any other. In order

to come out of issues, contacting Yahoo Help department is the best idea. The executives

over there are specifically trained and thus help you solve your problems in fast and secure way.

Nice Post! I have fixed all quicken related issues like quicken connectivity problems and duplicate transactions in quicken by our experts.

Quicken error ol-220-a

quicken data file

Quicken error CC-585

quicken error cc-892

Thanks for sharing such decent post you are doing great job by sharing such great info.

How to Update QuickBooks Desktop

Undo or Delete Reconciliation in QuickBooks

Get the right assistance at the right time by our team of highly qualified professionals. Resolve all your issues regarding Antivirus through Norton Antivirus Customer Service Phone Number. You can contact our team anytime; they are available 24/7.

Thanks for sharing such decent post you are doing great job by sharing such great info.

QuickBooks Clean Install Tool for Windows

QuickBooks Has Stopped Working Or Not Responding Error

QuickBooks Error Code 1321

QuickBooks File Doctor: A File Repairing Tool

This is really nice and content. We give best service for Home appliance repair Dubai Abu Dhabi. For more information visit our website.

Antika

Its a great pleasure reading your article post.Its full of information I am looking for and I love to post a comment that "The content of your post is awesome" Great work.

QuickBooks Tool Hub – Fix Common QuickBooks Errors

Steps to Set up a Chart of Accounts in QuickBooks

File Types and Extensions Used by QuickBooks Desktop

Different Windows 10 versions that work best for QuickBooks Desktop

Sering kalah dan jadi pesimis untuk menang di POKER ONLINE? Duit kalah banyak di judi?

Gabung saja di NOVAPOKER.PW betting online dengan WIN RATE TERTINGGI SE-ASIA

Dapatkan berbagai promo menarik dari kami WWW.NOVAPOKER.PW

• MINIMAL DEPOSIT 25.000,-

• BONUS REFERAL 20% / SETIAP MINGGU

• BONUS ROLLINGAN MINGGUAN UP TO 0.5%

Mainkan 9 Permainan dalam 1 User ID

(-) Adu Q

(-) Bandar Poker

(-) Bandar Q

(-) Capsa Susun

(-) Domino QQ

(-) Poker

(-) Sakong

(-) Bandar 66

(-) Perang Baccarat

AYO HUBUNGIN KAMI DI

WhatsApp : +855-877-39-168

LivaChat 24 JAM

#Novapoker #Pokernova #PokerOnline #Domino #Poker #DominoQQ #Domino99 #DominoQiuQiu #AduQ #BandarQ #Sakong #CapsaSusun #Bandar66

#PokerV #pkvgames #JudiOnlineIndonesia #AgenJudiOnline #JudiOnline #AgenTerpercaya #AgenOnlineTerbaik #BandarJudiOnline

#ceweksexy #dancer #cewekmalam #ceweknakal #naked

Assignmnet Writing Services

Dr Vijay Balaji also treats patients who need knee replacements and those who have had prior surgery or deformation needing complicated joint replacement operation. His aim is to not only ease his patients of their discomfort but also rehabilitate them to their earlier level of function or better. Call the best orthopedic doctor in Chennai, at 8101 555 555 for any ortho queries

The License Error in Quickbooks After Clone, often known as Quickbooks licensing error, is a rare and complicated problem. An error occurs if the encrypted file contains the product code and thus the registration number.

Thank you for Sharing this information.

An error that users receive while updating QuickBooks Desktop or Payroll i.e., quickbooks payroll support phone number. It’s a common QuickBooks update error that may leave you wondering what went wrong.

The information given in this blog is very useful for us and content in the blog is very informative. In this section, I want to share some useful information.Panda antivirus is well-known for providing top-notch protection for computers against malicious software. Panda Antivirus Error Code 10 is a common issue that many people encounter. The antivirus programs/functions become stuck when this error occurs.

ENT Specialist in Meerut

Kanag Best Ent Centre is located in the heart of the city of Meerut managed by a dynamic professional team from a clinical and non-clinical background. A wide range of medical surgical services for both e.g outpatient and inpatient is offered at the hospital.

This really answered my problem, thank you!

Visit Web

Topcatsnj.org

Information

I really appreciate your efforts for this Blog and wonderful Knowledge. Thank you for sharing this useful content. The information you describe here would be useful. The most popular printers among consumers are Epson printers. For both homes and businesses, it is the most practical printer. Many consumers, however, fail to set up the Epson printer's Wi-Fi due to a lack of experience. If you've recently purchased an Epson printer and need to know How To Connect Your Epson Printer L355 With A Wi-Fi Network?, you've come to the right place. Simply follow the steps to connect your Epson printer to Wi-Fi and start printing important papers.

Customer Helpdesk. Get solutions for technical issues. Follow Easy Steps & Get Quick Response with the support team, Chat link given. The technician will offer an accurate and exact solution for your products and the services.

+1 (800) 577-1869

RESET AN LAPTOP WITH WINDOWS 10

Avg Antivirus Support

There are certainly a lot of details like that to take into consideration. That is a great point to bring up. I offer the thoughts above as general inspiration but clearly there are questions like the one you bring up where the most important thing will be working in honest good faith. I don?t know if best practices have emerged around things like that, but I am sure that your job is clearly identified as a fair game. Both boys and girls feel the impact of just a moment’s pleasure, for the rest of their lives.

Torgi.gov.ru

Information

Click Here

Visit Web

As we all know how much Bill of Sale being used. This document is usually used for sale and purchase between two parties like Buyer & seller.

Bill of sale

Youtube is a vast and popular video streaming network; millions of people use it for study and income because it has turned into a highly beneficial platform for free student courses. Depending on their interests, customers can choose from a variety of films, including instructional videos, technical assistance videos, advertisement videos, movies, and personal use videos. We are a self-contained operation. In the United States, users can call YouTube Phone Number USA: +1-888-226-0555 if they have an issue with the service.

YouTube Phone Number

We, at Easy To Pitch focus on every industry space and our association with 500+ startups proves that. Once associated, we will ensure that your startup becomes pitch-perfect. Not only this we are a team that includes IIMians with various domain expertise.

startup pitch presentation

Nice article you have shared. In this customer records can saved for years. I liked the article you have shared. Hoping to see more such informative article from you. If you are in search of laser treatment then Doctors Hospital is the Best choice. They provide Best laser treatment for piles in Kasaragod.

Hi excellent blog! Does running a blog like this require a massive amount work? I've no knowledge of computer programming however I was hoping to start my own blog in the near future. Anyways, if you have any suggestions or tips for new blog owners please share. I understand this is off topic but I just had to ask. Thanks!
